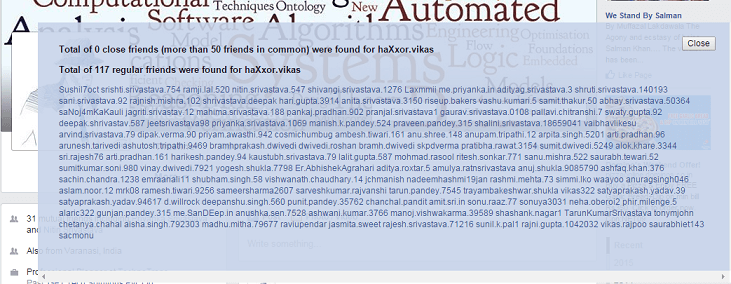

Machine learning and AI experts from around the world will gather in Long Beach, CA, next week at NIPS 2017 to present the latest advances in machine learning and computational neuroscience. Research from Facebook will be presented in 10 peer-reviewed publications and posters. Our researchers and engineers will also be leading and presenting numerous workshops, symposiums and tutorials throughout the week.

Join us LIVE from NIPS 2017

For the first time ever, we’ll be hosting a Facebook LIVE and streaming many of the NIPS sessions from our Facebook page, starting Monday at 5:30 pm. Be sure to tune in if you can’t be there in person.

Facebook research at NIPS 2017

Best of Both Worlds: Transferring Knowledge from Discriminative Learning to a Generative Visual Dialog Model

Jiasen Lu, Anitha Kannan, Jianwei Yang, Devi Parikh, and Dhruv Batra

Visual Dialog requires an AI agent to hold a meaningful dialog with humans in natural, conversational language about visual content. For instance, consider a blind user on social media. It would be nice if the AI could describe an uploaded picture, say “John just uploaded a picture from his vacation in Hawaii”. The user might then ask: “Great, is he at the beach?” We would want the AI to respond naturally and accurately with something like “No, on a mountain.” Or if you are interacting with an AI assistant, you might say “Can you see the baby in the baby monitor?” AI: “Yes, I can.” You: “Is he sleeping or playing?” and we would want an accurate answer. Or consider a human-robot team for search and rescue missions: Human: “Is there smoke in any room around you?” AI: “Yes, in one room.” Human: “Go there and look for people.” We would want the AI to understand this instruction in reference to the earlier conversation. In this paper, we develop state-of-the-art neural models that, given an image, a dialog history, and a follow-up question about the image, answer the question.

ELF: An Extensive, Lightweight and Flexible Research Platform for Real-time Strategy Games

Yuandong Tian, Qucheng Gong, Wenling Shang, Yuxin Wu, and Larry Zitnick

ELF is an extensive, lightweight and flexible platform that supports parallel simulations of game environments. Using ELF, we implement a highly customizable real-time strategy (RTS) engine with several games. One game, Mini-RTS, is a miniature version of StarCraft that captures key game dynamics and runs at 165K frames per second (FPS) on a laptop, an order of magnitude faster than other platforms. With only a single GPU and several CPUs, we train an AI for Mini-RTS in an end-to-end manner to beat a rule-based system with a win rate of over 70%.

Fader Networks: Manipulating Images by Sliding Attributes

Guillaume Lample, Neil Zeghidour, Nicolas Usunier, Antoine Bordes, Ludovic Denoyer, and Marc’Aurelio Ranzato